Earlier this month, I wrote about using SPSD to automate the deployment of custom SharePoint solutions and to support application lifecycle management. I also mentioned that you can write custom extensions to suit your needs.

In this article I’ll describe one such extension, which creates the new User Profile Properties that your customization on the SharePoint platform may require. Again, I assume that you are familiar with SPSD’s capabilities and have read its documentation before reading further. If you have not created your own extension before, or want to use one of the existing extensions, this post may serve as a reference. Feel free to leave a comment if any step is unclear.

My goal is to create an SPSD extension that adds custom properties to user profiles without hard-wiring the properties in the script. SPSD can pass XML to an extension when it executes, so I want the script (the extension) to read a chunk of XML (the user profile specifications) and create the user profile properties as specified. The beauty of this approach is that I can reuse the extension in any other project that uses SPSD for deployments.

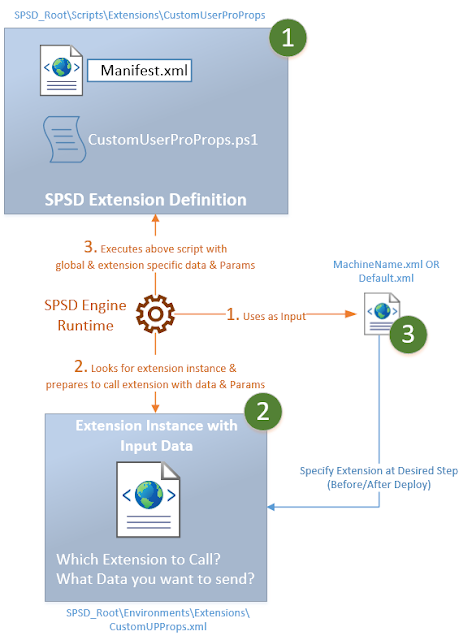

The figure above shows the high-level steps. Steps in GREEN cover creating the extension and specifying when it is called in the deployment process. Steps in ORANGE explain what happens when SPSD is run for a deployment. Many other things happen when SPSD’s deploy.bat is run, but the figure is scoped to the extension itself.

Here are the steps to create a new extension, define an instance of it, and call that instance from {machineName}.xml or default.xml.

1a. Create a folder under the \Scripts\Extensions folder, create a .ps1 file in it, and implement the logic that creates the custom user profile properties. By convention, the folder, the .ps1 file and the extension share the same name. At a minimum you need one PowerShell function, whose signature is governed by SPSD. The code is given below.

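# Load the User Profile assemblies; Out-Null keeps the assembly details out of the deployment output
[System.Reflection.Assembly]::LoadWithPartialName("Microsoft.Office.Server") | Out-Null

[System.Reflection.Assembly]::LoadWithPartialName("Microsoft.Office.Server.UserProfiles") | Out-Null

# Entry point named in the Event element of the extension instance; the parameter list follows the SPSD sample extension
function Create-CustomUserProfileProperties($parameters, [System.Xml.XmlElement]$data, [string]$extId, [string]$extensionPath) {

if ($data -eq $null) {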

Log -message "No Properties are specified in the configuration. User Profile Properties will not be created." -type $SPSD.LogTypes.Warning

return;

}

#$site = Get-SPSite $vars["SiteUrl"] # Get-SPSite

#$context = Get-SPServiceContext $site # Get The service Context

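# Find the User Profile service application by the type name supplied in the UserProfileApplicationName variable
$profileApp = @(Get-SPServiceApplication | ? {$_.TypeName -eq $vars["UserProfileApplicationName"]})[0]

if ($profileApp -eq $null) {

Log -message "User Profile service application was not found. User Profile Properties will not be created." -type $SPSD.LogTypes.Warning

return;

}

$context= [Microsoft.SharePoint.SPServiceContext]::GetContext($profileApp.ServiceApplicationProxyGroup, [Microsoft.SharePoint.SPSiteSubscriptionIdentifier]::Default)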

$upcm = New-Object Microsoft.Office.Server.UserProfiles.UserProfileConfigManager($context);

$ppm = $upcm.ProfilePropertyManager

$cpm = $ppm.GetCoreProperties()

$ptpm = $ppm.GetProfileTypeProperties([Microsoft.Office.Server.UserProfiles.ProfileType]::User)

$psm = [Microsoft.Office.Server.UserProfiles.ProfileSubTypeManager]::Get($context)

$ps = $psm.GetProfileSubtype([Microsoft.Office.Server.UserProfiles.ProfileSubtypeManager]::GetDefaultProfileName( [Microsoft.Office.Server.UserProfiles.ProfileType]::User))

$pspm = $ps.Properties

foreach($prop in $data.SelectNodes("//Property")) {

$property = $pspm.GetPropertyByName($prop.Name)

if ($property -eq $null)

{

$DefaultPrivacy=$prop.DefaultPrivacy

$PrivacyPolicy=$prop.PrivacyPolicy

$coreProp = $cpm.Create($false)

$coreProp.Name = $prop.Name

$coreProp.DisplayName = $prop.DisplayName

$coreProp.Type = $prop.Type

$coreProp.Length = [Int]::Parse( $prop.Length)

$cpm.Add($coreProp)

$profileTypeProp = $ptpm.Create($coreProp);

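# XML attribute values are strings, and any non-empty string converts to $true, so compare the text explicitly
$profileTypeProp.IsVisibleOnEditor = ($prop.IsVisibleOnEditor -replace '^\$', '') -eq 'true'

$profileTypeProp.IsVisibleOnViewer = ($prop.IsVisibleOnViewer -replace '^\$', '') -eq 'true'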

$ptpm.Add($profileTypeProp)

$profileSubTypeProp = $pspm.Create($profileTypeProp);

$profileSubTypeProp.DefaultPrivacy = [Microsoft.Office.Server.UserProfiles.Privacy]::$DefaultPrivacy

$profileSubTypeProp.PrivacyPolicy = [Microsoft.Office.Server.UserProfiles.PrivacyPolicy]::$PrivacyPolicy

$pspm.Add($profileSubTypeProp)

Log -message ([String]::Format( "Profile Property {0} was created successfully.",$prop.Name )) -type $SPSD.LogTypes.Normal

}

else

{

Log -message ([String]::Format( "Profile Property {0} already exists. Skipped.", $prop.Name)) -type $SPSD.LogTypes.Warning

}

}

}
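
Because the properties come from XML, a typo in an attribute only surfaces when a SharePoint call fails partway through the loop. A pre-check along the following lines could run before any property is created. This is only a sketch: `Test-ProfilePropertyNode` is a hypothetical helper name (not part of SPSD), the sample XML is made up, and the allowed values are the ones listed in the comment of the instance file in step 2. It runs in a plain PowerShell console without SharePoint.

```powershell
# Hypothetical helper (not part of SPSD): returns the list of problems found in one <Property> node
function Test-ProfilePropertyNode([System.Xml.XmlElement]$node) {
    $problems = @()
    $privacyValues = 'Public', 'Contacts', 'Organization', 'Manager', 'Private', 'NotSet'
    $policyValues  = 'Mandatory', 'OptIn', 'OptOut', 'Disabled'

    # GetAttribute returns an empty string when the attribute is missing
    if ($node.GetAttribute('Name') -eq '') { $problems += 'Name is missing' }

    $length = 0
    $isNumber = [int]::TryParse($node.GetAttribute('Length'), [ref]$length)
    if ((-not $isNumber) -or ($length -gt 3600)) {
        $problems += 'Length must be a number no greater than 3600'
    }
    if ($privacyValues -notcontains $node.GetAttribute('DefaultPrivacy')) {
        $problems += 'DefaultPrivacy is not a known value'
    }
    if ($policyValues -notcontains $node.GetAttribute('PrivacyPolicy')) {
        $problems += 'PrivacyPolicy is not a known value'
    }
    # The leading comma keeps an empty result an array instead of $null
    return ,$problems
}

# Made-up sample, shaped like the <Data> node of an extension instance
[xml]$sample = @'
<Data>
  <Property Name="Good" Type="string" Length="100" DefaultPrivacy="Private" PrivacyPolicy="OptIn" />
  <Property Name="Bad" Type="string" Length="9000" DefaultPrivacy="Everyone" PrivacyPolicy="OptIn" />
</Data>
'@

$results = @{}
foreach ($node in $sample.DocumentElement.SelectNodes('Property')) {
    $name = $node.GetAttribute('Name')
    $results[$name] = Test-ProfilePropertyNode $node
    foreach ($problem in $results[$name]) {
        Write-Output ("{0}: {1}" -f $name, $problem)
    }
}
```

Inside the extension, the same check could log each problem with a warning and skip the node instead of writing to the console.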

1b. Define a manifest.xml file, the structure of which is governed by SPSD. It declares the Type (the logical name of the extension, which by convention can match the folder name), a description, and the name of the .ps1 file created in the previous step.

<SPSD>

<Extensions>

<Extension>

<Manifest>

<Type>CustomUserProProps</Type>

<Description>

Use this extension to create custom user profile properties

</Description>

<Version>1.0.0.0</Version>

<Script>CustomUserProPropsUserProfileCustomProperties.ps1</Script>

<Enabled>true</Enabled>

</Manifest>

</Extension>

</Extensions>

</SPSD>

2. Under the \Environments\Extensions folder, create an XML file in the structure SPSD expects; this file becomes an instance of the extension. In it you specify the ID of the instance, the Type of the extension, the Event that determines when the instance is called at run time (for instance AfterDeploy or BeforeDeploy), the Parameters, and the Data. The Parameters you specify here are available to the script as input. Under the Data node you can place arbitrary XML that your PowerShell script understands. An example is shown below.

<SPSD Version="5.0.1.6438">

<Extensions>

<Extension ID="CreateUserProfileProperties" Type="CustomUserProProps" >

<Events>

<Event Name="AfterDeploy">Create-CustomUserProfileProperties</Event>

</Events>

<Parameters>

<Parameter Name="SomeParam">Some Value</Parameter>

</Parameters>

<Data>

<!--

DefaultPrivacy values

"Public": gives everyone visibility to the user's profile properties and other My Site content.

"Contacts": deprecated. Limits the visibility of the user's profile properties, and other My Site content, to the user's colleagues.

"Organization": deprecated. Limits the visibility of the user's profile properties, and other My Site content, to the user's workgroup.

"Manager": deprecated. Limits the visibility of the user's profile properties, and other My Site content, to the user and the user's manager.

"Private": limits the visibility of the user's profile properties and other My Site content to the user only.

"NotSet": the privacy level for the user's profile properties and other My Site content is not set.

PrivacyPolicy values

"Mandatory": makes it a requirement that the user fill in a value.

"OptIn": opt in to provide a privacy policy value for a property.

"OptOut": opt out from providing a privacy policy value for a property.

"Disabled": turns off the feature and hides all related user interface.

Length: maximum 3600

-->

<Property Name="YourPropName" DisplayName="Prop DisplayName" Type="string" Length="3600" DefaultPrivacy="Private" PrivacyPolicy="OptIn" IsVisibleOnEditor="$true" IsVisibleOnViewer="$true"></Property>

</Data>

</Extension>

</Extensions>

</SPSD>
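
Everything under the Data node reaches the script as XML, so every attribute value is a string. The sketch below runs in any PowerShell console without SharePoint. It shows one way to read the nodes and why a flag such as IsVisibleOnViewer needs an explicit comparison rather than a cast. The sample property names and the `ConvertTo-Flag` helper are illustrative assumptions, not part of SPSD.

```powershell
# Illustrative <Data> node; the property names are made up for this example
[xml]$doc = @'
<Data>
  <Property Name="CostCenter" Length="50" IsVisibleOnViewer="$true" />
  <Property Name="BadgeId" Length="20" IsVisibleOnViewer="$false" />
</Data>
'@
$data = $doc.DocumentElement

# Hypothetical helper: accepts "true" or "$true" in any casing; everything else yields $false
function ConvertTo-Flag([string]$text) {
    return ($text.Trim().TrimStart('$') -eq 'true')
}

$flags = @{}
foreach ($prop in $data.SelectNodes('Property')) {
    $name = $prop.GetAttribute('Name')
    $raw  = $prop.GetAttribute('IsVisibleOnViewer')
    $flags[$name] = ConvertTo-Flag $raw

    # A plain cast treats any non-empty string as $true, including the text "$false"
    Write-Output ("{0}: cast = {1}, parsed = {2}" -f $name, [bool]$raw, $flags[$name])
}
```

Using `GetAttribute` also sidesteps any confusion between an attribute called Name and the node's own Name property.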

3. Modify {TargetMachineName}.xml or “Default.xml” under the \Environments folder and add a reference to the extension instance file created above. An example is shown below.

<!-- AFTER WSP DEPLOYMENTS-->

<Extension ID="CreateUserProfileProperties" Type="CustomUserProProps" FilePath="Extensions\CustomUPProps.xml" />

</Extensions>

Now let’s look at what happens at run time. At this point you should be able to connect the dots (the steps in orange in the figure above):

R1. When SPSD’s batch file (typically deploy.bat) is run, it reads {targetmachine}.xml, or default.xml if the machine-specific file is absent, and starts doing whatever is specified there; see SPSD’s documentation for reference. At this point SPSD builds an inventory of the things it needs to do and determines the order in which to execute the deployment.

R2. When the time comes for our extension (as dictated by steps 2 and 3 above), SPSD works out which function of the PowerShell script to call and what data to pass (as defined in step 2 above).

R3. Finally, SPSD executes the extension and records its messages for reference.
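
The lookup described in R2 can be pictured with the sketch below. It is not SPSD's actual code, which this post does not show. It only illustrates the idea of reading the function name from the Event element and calling it with the instance's parameters and data. `Invoke-DemoExtension`, the sample XML, and passing the parameters as a hashtable are all assumptions made for the illustration.

```powershell
# Stand-in for an extension function (hypothetical, defined only for this demo)
function Invoke-DemoExtension($parameters, [System.Xml.XmlElement]$data) {
    $count = $data.SelectNodes('Property').Count
    return ("{0} properties, SomeParam={1}" -f $count, $parameters['SomeParam'])
}

# Made-up extension instance, shaped like the file from step 2
[xml]$instance = @'
<Extension ID="Demo" Type="DemoType">
  <Events><Event Name="AfterDeploy">Invoke-DemoExtension</Event></Events>
  <Parameters><Parameter Name="SomeParam">Some Value</Parameter></Parameters>
  <Data><Property Name="A" /><Property Name="B" /></Data>
</Extension>
'@
$ext = $instance.DocumentElement

# Collect the <Parameter> nodes into a hashtable keyed by name
$parameters = @{}
foreach ($p in $ext.SelectNodes('Parameters/Parameter')) {
    $parameters[$p.GetAttribute('Name')] = $p.InnerText
}

# Find the function registered for the current event and call it by name
$currentEvent = 'AfterDeploy'
$eventNode = $ext.SelectSingleNode("Events/Event[@Name='$currentEvent']")
$result = $null
if ($eventNode -ne $null) {
    $functionName = $eventNode.InnerText.Trim()
    $dataNode = $ext.SelectSingleNode('Data')
    $result = & $functionName -parameters $parameters -data $dataNode
}
$result
```

The call operator `&` is what lets a function name stored as text be invoked, which is why the Event element only needs to carry the name.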

That’s it for now! Feel free to leave comments.